The Clinical Illusion

When “Clinically Proven” Isn’t What You Think

This is something I’ve been wanting to write for a while. And I’m finally seated, ready to spill the tea.

Clinicals. Clinicals. Clinicals.

You see it in brand bios. You hear it in every major beauty store. You walk past glossy shelves promising “clinical results.” And naturally, you think: well studied. Well researched. Backed by science. Backed by evidence. Rigorously tested.

However.

Did you know that many “clinical” claims are not what you think they are?

I don’t make the rules. I just read the fine print. As a longtime beauty enthusiast and someone with a background in biochemistry who is currently building her own skincare brand, I feel a responsibility to clarify something that gets blurred in marketing every day.

Let’s use Rhode as a case study.

Not because I have anything against the brand. I actually use several Rhode products regularly. I’m using Rhode as an example because it is a highly visible, well-funded, industry-backed, and widely trusted brand.

On their “Barrier Butter” product page, you’ll see language like “clinically proven hydration.” That phrasing signals authority. It suggests measured change. Instrument-based validation.

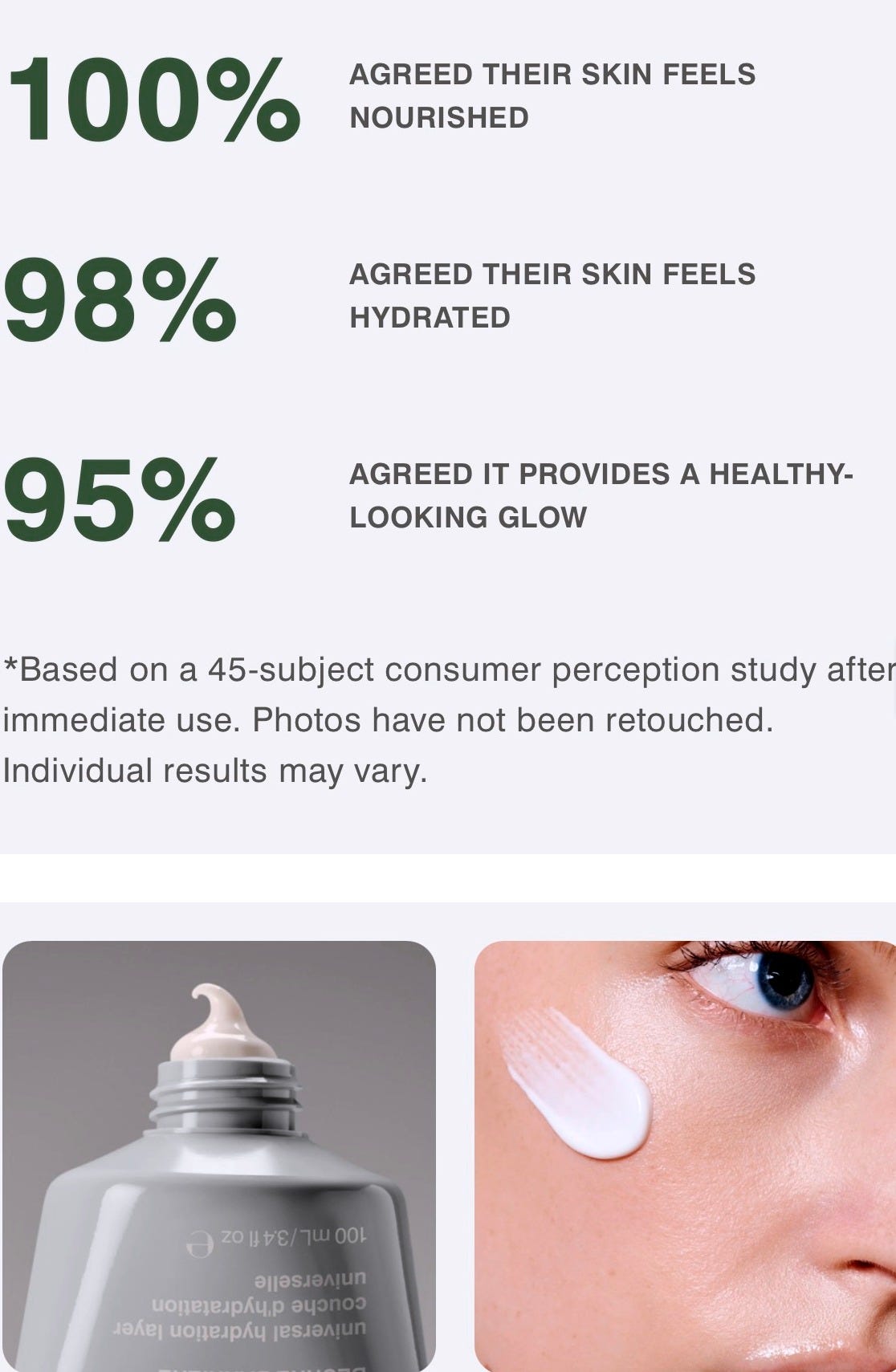

But when you examine the supporting text, the type of testing behind the claim is not always clearly distinguished. “Real Results” is displayed prominently, yet the underlying data references consumer perception testing (CPT) rather than disclosed instrumental measurement.

Meaning: participants used the product under defined conditions and reported how they felt.

The truth is, that is different from instrument-measured clinical testing. It’s different from measuring hydration with a Corneometer, a device that estimates skin-surface hydration from electrical capacitance.

A CPT is essentially a structured survey. Participants follow usage instructions and answer prepared questions about hydration, smoothness, irritation, and satisfaction.

There is nothing inherently wrong with this. My own brand is currently conducting one through a third party. When done properly, it can be blinded, administered by a third party, and ethically structured to add objectivity.

But let’s be clear.

Consumer perception testing measures experience. Instrumental clinical testing measures physiological change. Both have value, but they are not interchangeable.

The issue is not that Rhode conducts CPTs. Many brands do. I will. That is not the concern.

The concern is when “clinical results” language strongly implies objective laboratory measurement while the fine print reveals perception-based reporting. Interestingly, on some lip product pages, clinical studies and consumer perception tests are labeled more distinctly. Yet even there, the clinical studies lack disclosed instrumentation, percentages tied to measurement tools, or methodological clarity.

Which raises questions about consistency.

If a brand can distinguish between instrumental data and perception data in one context, why allow “clinically proven” to function as a broader umbrella elsewhere without upfront transparency? Perhaps it is industry shorthand. Perhaps the assumption is that consumers do not need that detail. But as someone with a research background who reads beyond headlines, ambiguity isn’t satisfying.

When I see “clinically proven,” I want to know:

Was this instrument measured?

Evaluator graded?

Self-reported?

Statistically significant?

The word “clinical” carries scientific weight. It borrows credibility from research rigor. Most consumers do not understand the difference between:

“100% said their skin felt more hydrated” and “Skin hydration increased by X% as measured by instrumentation.”

Those statements mean very different things. Perception-based results aren’t invalid, but they require clear framing. Whether regulations allow broad terminology is secondary. What ultimately matters is how consumers interpret those claims.
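To make that gap concrete, here is a minimal sketch of how the two kinds of numbers get calculated. Everything in it is hypothetical: the six participants, their survey answers, and the before-and-after instrument readings are invented for illustration and do not come from Rhode or any real study. The function names are mine, too.

```python
from statistics import mean

# Hypothetical data for six participants. None of these numbers
# come from a real study; they only illustrate the arithmetic.
felt_more_hydrated = [True, True, True, True, True, True]  # self-reported survey answers
baseline = [40.0, 35.0, 50.0, 45.0, 38.0, 42.0]   # instrument reading before use (arbitrary units)
week_four = [44.0, 35.0, 55.0, 45.0, 40.0, 46.0]  # instrument reading after four weeks


def percent_agreeing(answers):
    """Share of participants who answered yes, as a percentage."""
    return 100.0 * sum(answers) / len(answers)


def mean_percent_change(before, after):
    """Average per-participant change in the instrument reading, as a percentage."""
    changes = [100.0 * (a - b) / b for b, a in zip(before, after)]
    return mean(changes)


perception = percent_agreeing(felt_more_hydrated)
measured = mean_percent_change(baseline, week_four)

print(f"{perception:.0f}% said their skin felt more hydrated")
print(f"Measured hydration changed by {measured:.1f}% on average")
```

In this made-up panel, every participant says yes, yet two of the six show no measured change at all. Both numbers are honest. They simply answer different questions.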

When language implies measurement without disclosing the nature of that measurement, it creates a perception of rigor that may exceed what was conducted. Legally, this often falls within regulatory boundaries. Ethically, the distinction deserves transparency, especially from brands operating at Rhode’s scale. If the data is perception based, lead with that. If it is instrument measured, lead with that.

If we are going to invoke the authority of science in beauty, which many brands increasingly do, we must respect the responsibility that comes with that language. When you are selling performance, terminology matters. Claims matter. Framing matters. Scientific integrity matters. Period.

During my undergraduate research years, I saw how quickly trust in science fractures when data is manipulated or loosely framed. We were studying diseases that change families forever. That experience defined my standard for scientific discipline.

Now more than ever, rebuilding that trust requires a radical return to rigor: not broader language or implication, but clarity without compromise and precision without exception.

So when I see language stretched for marketing impact, even in something as low-stakes as skincare, I pay attention. At this point, you might be wondering: What does a more rigorous cosmetic clinical look like?

Controlled environmental conditions.

Standardized washout periods.

Defined inclusion and exclusion criteria.

Instrument-based measurements.

Statistical analysis.

Even then, cosmetic clinicals are small in scale and industry sponsored. They are not pharmaceutical drug trials. But they are categorically different from asking participants how something felt. That distinction is the point. Not all clinical claims are created equal. Not all percentages measure the same thing. Not all “proven” statements mean what you assume they mean.
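For the curious, “statistical analysis” can be as simple as asking whether a before-and-after difference is larger than chance would explain. Below is a minimal sketch of a paired t-test, one common way to check that. I am not claiming any particular brand or lab uses this exact test. The readings are hypothetical, and the critical value is hard-coded for this six-person example only.

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical instrument readings for six participants (arbitrary units).
# Invented for illustration; not from any real study.
baseline = [40.0, 35.0, 50.0, 45.0, 38.0, 42.0]
week_four = [44.0, 35.0, 55.0, 45.0, 40.0, 46.0]


def paired_t_statistic(before, after):
    """t statistic for the mean within-participant difference."""
    diffs = [a - b for b, a in zip(before, after)]
    standard_error = stdev(diffs) / sqrt(len(diffs))
    return mean(diffs) / standard_error


# Two-sided 5% critical value of the t distribution with 5 degrees of
# freedom (six participants minus one). A different sample size needs a
# different value.
T_CRITICAL_DF5 = 2.571

t_stat = paired_t_statistic(baseline, week_four)
is_significant = abs(t_stat) > T_CRITICAL_DF5

print(f"t = {t_stat:.2f}, significant at the 5% level: {is_significant}")
```

A real study would also report the sample size, the test used, and the p-value. If a product page gives a percentage with none of that, you cannot tell whether the change is meaningful.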

Read the fine print.

Ask what was measured.

Ask how it was measured.

Ask who measured it.

Transparency is not anti-marketing. It is elevated marketing. If the beauty industry wants to invoke science, it must honor its discipline.

🔬sensitive ilayda